Update [6 Jan. 2024]: The Decaf dataset is now available!

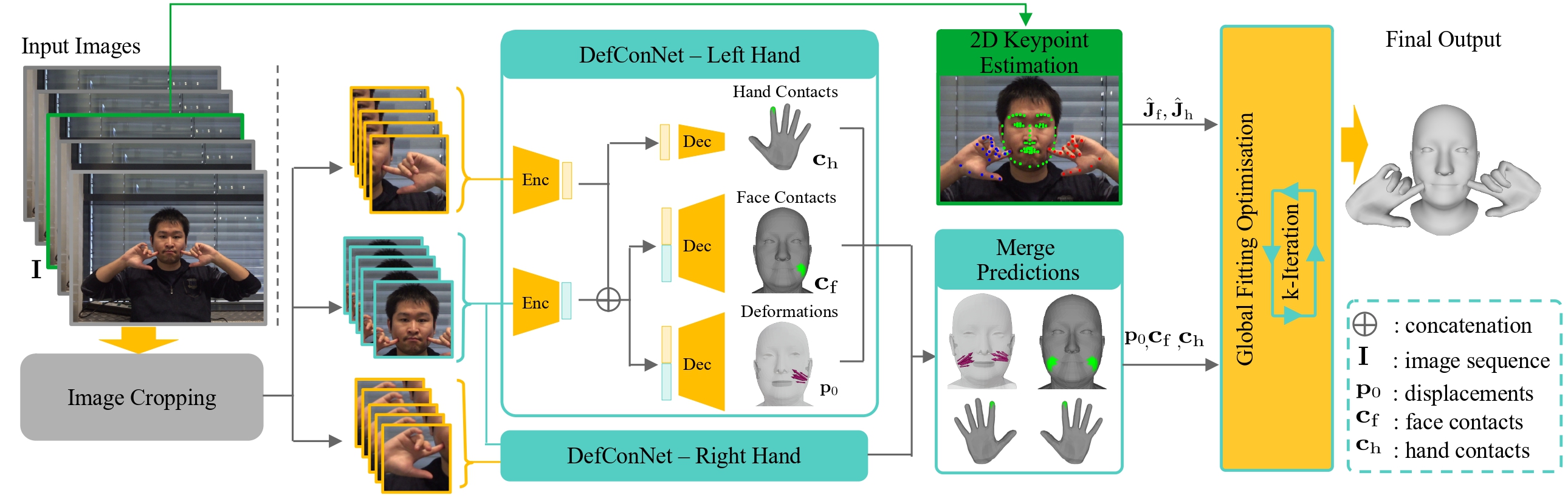

We introduce the first method for motion capture from a monocular video that regresses 3D hand and face motions along with the deformations arising from their interactions. We model hands as articulated objects that induce non-rigid face deformations during active interaction. Our method relies on a new hand-face motion and interaction capture dataset with realistic face deformations, acquired with a markerless multi-view camera system. As a pivotal step in its creation, we process the reconstructed raw 3D shapes with position-based dynamics and an approach for non-uniform stiffness estimation of the head tissues, which yields plausible annotations of the surface deformations, hand-face contact regions and head-hand positions. At the core of our neural approach are a variational auto-encoder supplying the hand-face depth prior and modules that guide the 3D tracking by estimating the contacts and the deformations. Our final 3D hand and face reconstructions are realistic and more plausible than those of several baselines applicable in our setting, both quantitatively and qualitatively.
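To give a rough intuition for the position-based dynamics step mentioned above, here is a minimal sketch of the textbook distance-constraint projection with a per-constraint stiffness. It is not the pipeline used to build the dataset: the function name `project_distance_constraints`, the constraint tuple layout and the stiffness values are assumptions made purely for illustration.

```python
import math


def project_distance_constraints(positions, inv_masses, constraints, iterations=1):
    """Generic position-based dynamics projection (illustrative sketch only).

    positions   : list of [x, y, z] points
    inv_masses  : inverse mass per point (0.0 pins a point in place)
    constraints : list of (i, j, rest_length, stiffness), stiffness in [0, 1];
                  giving each constraint its own stiffness is a simple way to
                  mimic non-uniform tissue stiffness (hypothetical values)
    """
    pos = [list(p) for p in positions]  # work on a copy
    for _ in range(iterations):
        for i, j, rest, stiffness in constraints:
            delta = [a - b for a, b in zip(pos[i], pos[j])]
            dist = math.sqrt(sum(c * c for c in delta))
            w_sum = inv_masses[i] + inv_masses[j]
            if dist == 0.0 or w_sum == 0.0:
                continue  # degenerate edge or both points pinned
            # Scalar correction along the edge, scaled by the stiffness.
            scale = stiffness * (dist - rest) / (dist * w_sum)
            for axis in range(len(delta)):
                pos[i][axis] -= inv_masses[i] * scale * delta[axis]
                pos[j][axis] += inv_masses[j] * scale * delta[axis]
    return pos


# Two points stretched to twice their rest length of 1.0.
points = [[0.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
stiff = project_distance_constraints(points, [1.0, 1.0], [(0, 1, 1.0, 1.0)])
soft = project_distance_constraints(points, [1.0, 1.0], [(0, 1, 1.0, 0.5)])
```

With a stiffness of 1.0 a single pass restores the rest length exactly, while a stiffness of 0.5 removes only half of the stretch, so softer regions are left more deformed after the same number of iterations.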

@article{DecafTOG2023,
  author = {Shimada, Soshi and Golyanik, Vladislav and P\'{e}rez, Patrick and Theobalt, Christian},
  title = {Decaf: Monocular Deformation Capture for Face and Hand Interactions},
  journal = {ACM Transactions on Graphics (TOG)},
  month = {dec},
  volume = {42},
  number = {6},
  articleno = {264},
  year = {2023},
  publisher = {ACM}
}