Single-Shot Multi-Person 3D Pose Estimation From Monocular RGB

Abstract

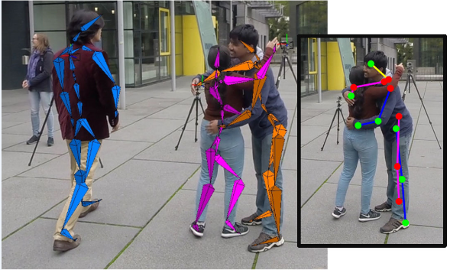

We propose a new single-shot method for multi-person 3D pose estimation in general scenes from a monocular RGB camera. Our approach uses novel occlusion-robust pose-maps (ORPM), which enable full-body pose inference even under strong partial occlusions by other people and objects in the scene. ORPM outputs a fixed number of maps which encode the 3D joint locations of all people in the scene. Body part associations allow us to infer 3D pose for an arbitrary number of people without explicit bounding box prediction. To train our approach, we introduce MuCo-3DHP, the first large-scale training data set showing real images of sophisticated multi-person interactions and occlusions. We synthesize a large corpus of multi-person images by compositing images of individual people (with ground truth from multi-view performance capture). We evaluate our method on our new, challenging 3D-annotated multi-person test set MuPoTS-3D, where we achieve state-of-the-art performance. To further stimulate research in multi-person 3D pose estimation, we will make our new datasets and associated code publicly available for research purposes.
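The compositing idea mentioned above can be illustrated with a minimal sketch. It is not the pipeline used to build MuCo-3DHP: the `PersonLayer` fields, the scalar per-person depth, and the far-to-near paste order are all assumptions made for illustration, and plain nested lists stand in for image arrays.

```python
from dataclasses import dataclass
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]
Mask = List[List[bool]]


@dataclass
class PersonLayer:
    """One single-person frame (hypothetical structure for this sketch)."""
    image: Image   # RGB pixels of the single-person frame
    mask: Mask     # True where the pixel belongs to the person
    depth: float   # assumed distance of the person from the camera


def composite(background: Image, layers: List[PersonLayer]) -> Image:
    """Paste layers far-to-near so that nearer people occlude farther ones."""
    out = [row[:] for row in background]  # copy rows; the input stays untouched
    for layer in sorted(layers, key=lambda l: l.depth, reverse=True):
        for y, mask_row in enumerate(layer.mask):
            for x, is_foreground in enumerate(mask_row):
                if is_foreground:
                    out[y][x] = layer.image[y][x]
    return out


def solid(height: int, width: int, color: Pixel) -> Image:
    """Helper for the toy example: an image filled with one color."""
    return [[color] * width for _ in range(height)]


BLACK, RED, BLUE = (0, 0, 0), (255, 0, 0), (0, 0, 255)
bg = solid(2, 3, BLACK)
far = PersonLayer(solid(2, 3, RED), [[True, True, False], [False, False, False]], depth=4.0)
near = PersonLayer(solid(2, 3, BLUE), [[False, True, True], [False, False, False]], depth=2.0)

# The two masks overlap at column 1 of the top row; the nearer (blue) person wins there.
result = composite(bg, [near, far])
```

In this toy example the overlapping pixel takes the color of the nearer person, which is the kind of inter-person occlusion that composited training images are meant to contain.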

Downloads

Citation

@inproceedings{singleshotmultiperson2018,

title = {Single-Shot Multi-Person 3D Pose Estimation From Monocular RGB},

author = {Mehta, Dushyant and Sotnychenko, Oleksandr and Mueller, Franziska and Xu, Weipeng and Sridhar, Srinath and Pons-Moll, Gerard and Theobalt, Christian},

booktitle = {3D Vision (3DV), 2018 Sixth International Conference on},

month = {sep},

year = {2018},

organization={IEEE},

url = {http://gvv.mpi-inf.mpg.de/projects/SingleShotMultiPerson}

}

Acknowledgments

This work was funded by the ERC Starting Grant project CapReal (335545). We would also like to thank Hyeongwoo Kim and Eldar Insafutdinov for their assistance.

Contact

For questions and clarifications, please get in touch with: Dushyant Mehta

dmehta@mpi-inf.mpg.de