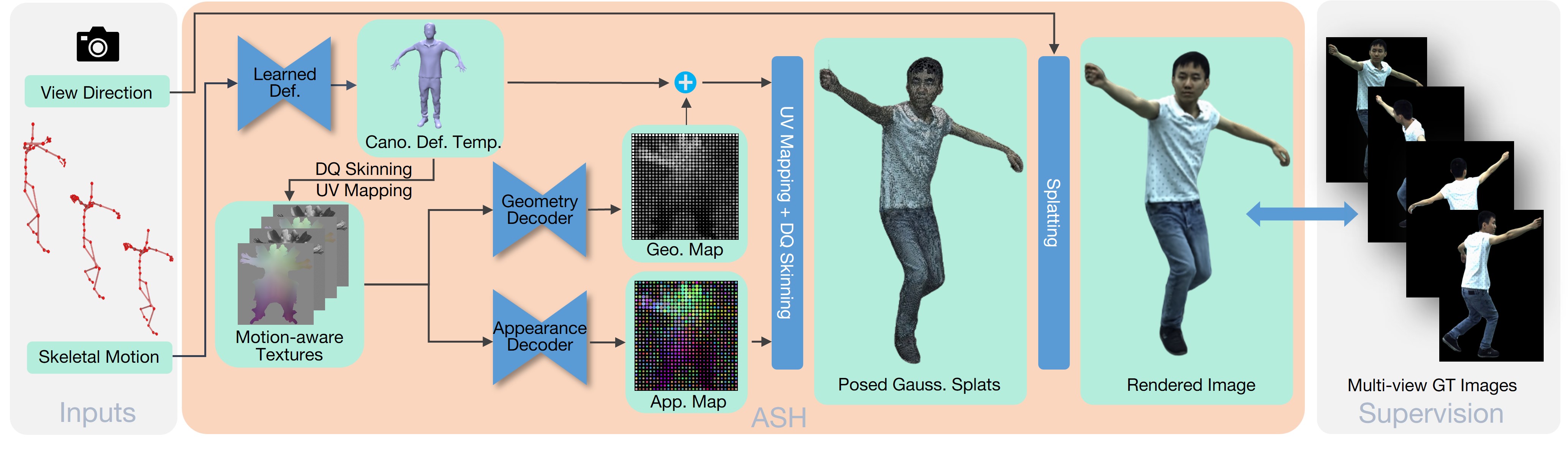

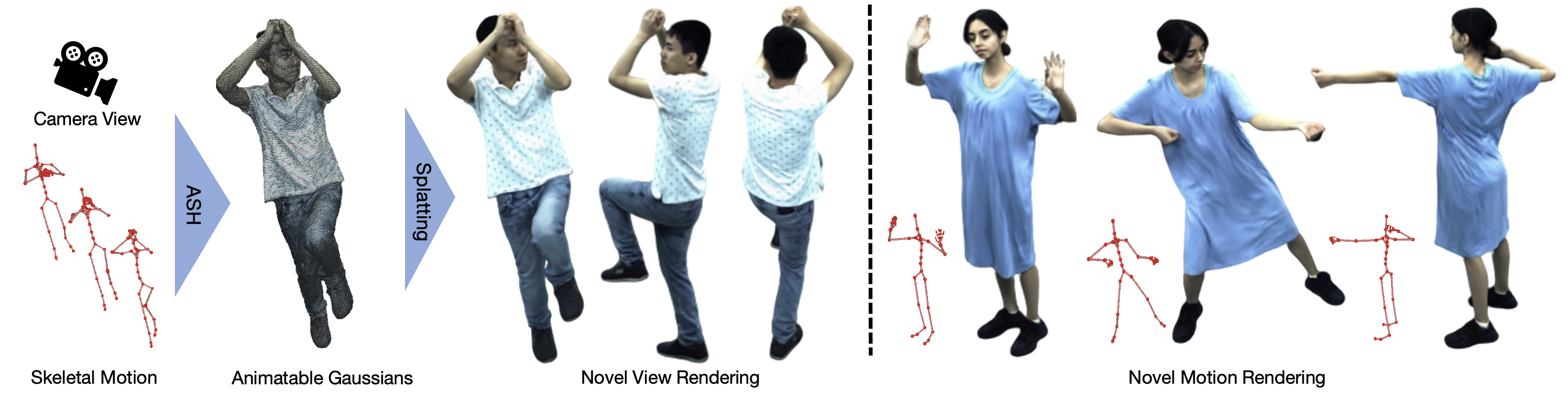

Figure 1: ASH takes as input an arbitrary 3D skeletal pose and virtual camera view, which can be controlled by the user, and generates a photorealistic rendering of the human in real time. To achieve this, we propose an efficient and animatable Gaussian representation, which is parameterized on the surface of a deformable template mesh.