EgoCap: Egocentric Marker-less Motion Capture with Two Fisheye Cameras

Abstract

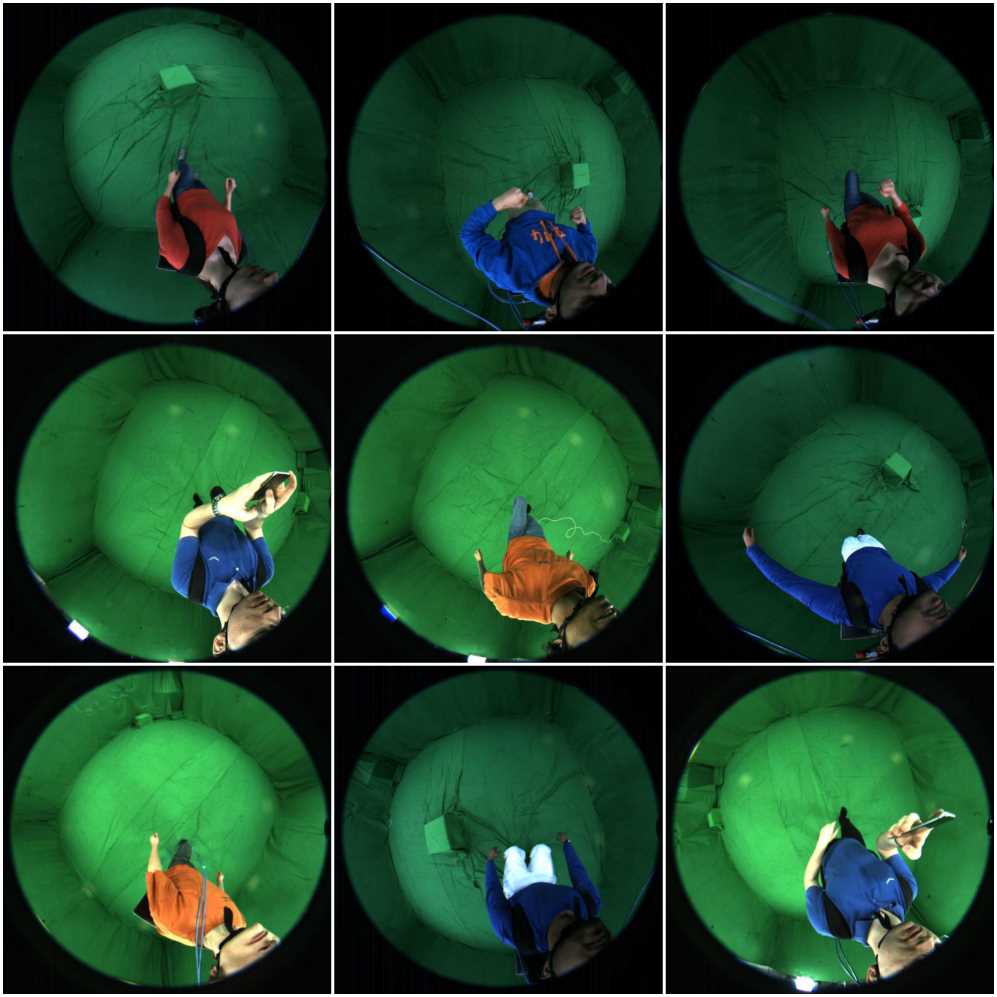

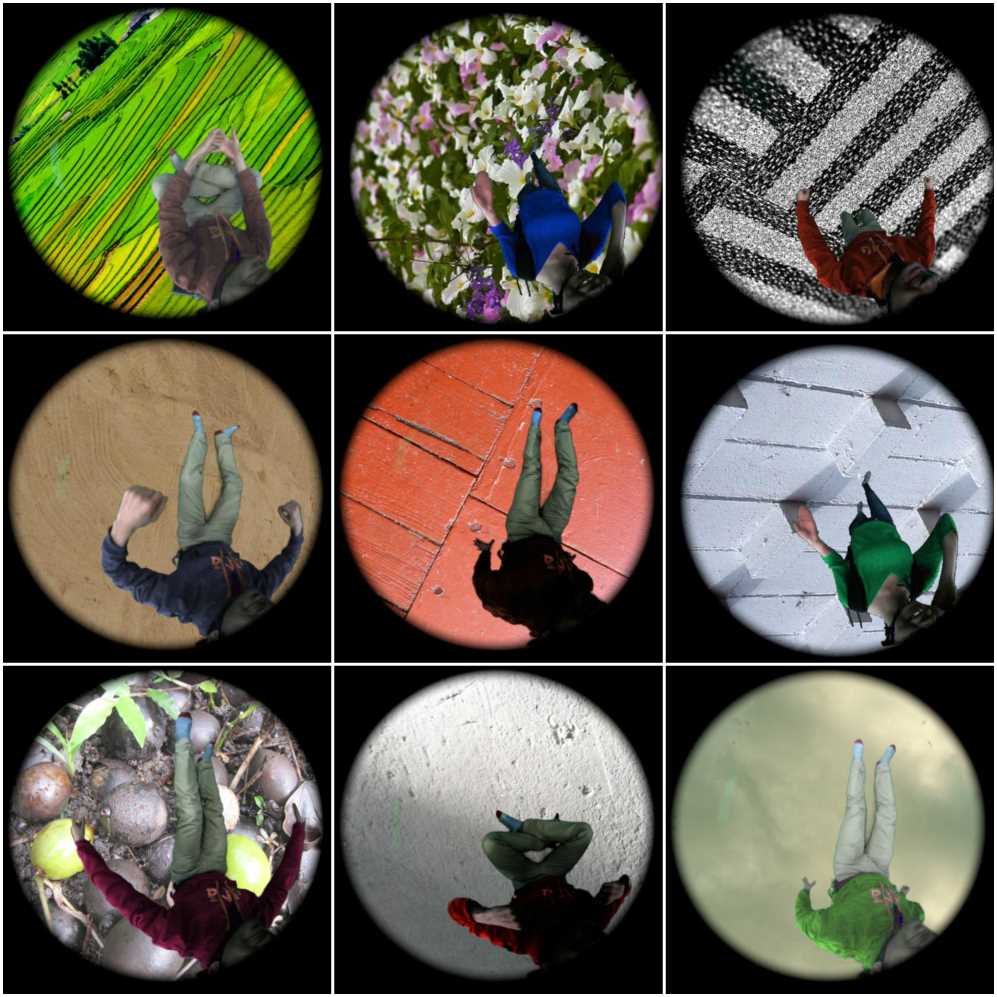

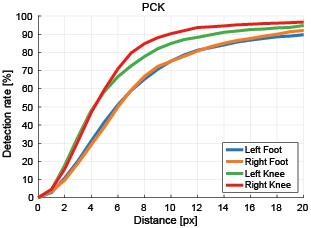

Marker-based and marker-less optical skeletal motion-capture methods use an outside-in arrangement of cameras placed around a scene, with viewpoints converging on the center. They often cause discomfort when marker suits are required, and their recording volume is severely restricted and often constrained to indoor scenes with controlled backgrounds. Alternative suit-based systems use several inertial measurement units or an exoskeleton to capture motion. This makes capture independent of a confined volume, but requires substantial body instrumentation that is often constraining and hard to set up. We therefore propose a new method for real-time, marker-less, egocentric motion capture that estimates the full-body skeleton pose from a lightweight stereo pair of fisheye cameras attached to a helmet or virtual reality headset. It combines the strength of a new generative pose estimation framework for fisheye views with a ConvNet-based body-part detector trained on a large new dataset. Our inside-in method captures full-body motion in general indoor and outdoor scenes, including crowded scenes with many people in close vicinity. Setup time and effort are minimal, and the captured user can move around freely, which enables reconstruction of larger-scale activities and is particularly useful in virtual reality, where users can freely roam and interact while seeing their fully motion-captured virtual body.

Downloads


Terms of use

*The data we provide is meant for research purposes only, and any use of it for non-scientific purposes is not allowed. This includes publishing any scientific results obtained with our data in non-scientific literature, such as the tabloid press. We ask researchers to respect our actors and not to use the data for any distasteful manipulations. If you use our data, you are required to cite its origin:* Helge Rhodin, Christian Richardt, Dan Casas, Eldar Insafutdinov, Mohammad Shafiei, Hans-Peter Seidel, Bernt Schiele, and Christian Theobalt. EgoCap: Egocentric marker-less motion capture with two fisheye cameras. ACM Transactions on Graphics (Proceedings SIGGRAPH Asia), 35(8), 2016.

Acknowledgments

We thank all reviewers for their valuable feedback, Dushyant Mehta, James Tompkin, and The Foundry for license support. This research was funded by the ERC Starting Grant project CapReal (335545).