LiveHand: Real-time and Photorealistic Neural Hand Rendering

Abstract

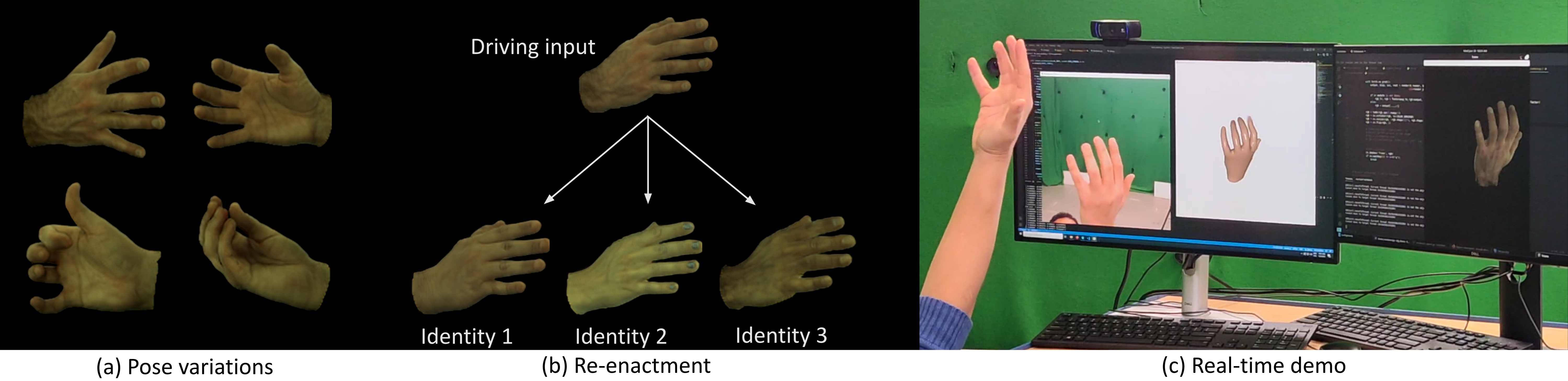

The human hand is the main medium through which we interact with our surroundings. Hence, its digitization is of uttermost importance, with direct applications in VR/AR, gaming, and media production amongst other areas. While there are several works modeling the geometry of hands, little attention has been paid to capturing photo-realistic appearance. Moreover, for applications in extended reality and gaming, real-time rendering is critical. We present the first neural-implicit approach to photo-realistically render hands in real-time. This is a challenging problem as hands are textured and undergo strong articulations with pose-dependent effects. However, we show that this aim is achievable through our carefully designed method. This includes training on a low-resolution rendering of a neural radiance field, together with a 3D-consistent super-resolution module and mesh-guided sampling and space canonicalization. We demonstrate a novel application of perceptual loss on the image space, which is critical for learning details accurately. We also show a live demo where we photo-realistically render the human hand in real-time for the first time, while also modeling pose- and view-dependent appearance effects. We ablate all our design choices and show that they optimize for rendering speed and quality.

Citation

@inproceedings{mundra2023livehand,
  title = {LiveHand: Real-time and Photorealistic Neural Hand Rendering},
  author = {Akshay Mundra and Mallikarjun {B R} and Jiayi Wang and Marc Habermann and Christian Theobalt and Mohamed Elgharib},
  booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV)},
  month = {October},
  year = {2023},
}

Acknowledgments

This work was supported by the ERC Consolidator Grant 4DRepLy (770784).